this post was submitted on 03 Jan 2024


Be sure to follow the rule before you head out.

Rule: You must post before you leave.

Other rules

Behavior rules:

- No bigotry (transphobia, racism, etc…)

- No genocide denial

- No support for authoritarian behaviour (incl. Tankies)

- No namecalling

- Accounts from lemmygrad.ml, threads.net, or hexbear.net are held to higher standards

- Other things seen as clearly bad

Posting rules:

- No AI generated content (DALL-E etc…)

- No advertisements

- No gore / violence

- Mutual aid posts are not allowed

NSFW: NSFW content is permitted but it must be tagged and have content warnings. Anything that doesn't adhere to this will be removed. Content warnings should be added like: [penis], [explicit description of sex]. Non-sexualized breasts of any gender are not considered inappropriate and therefore do not need to be blurred/tagged.

If you have any questions, feel free to contact us on our matrix channel or email.



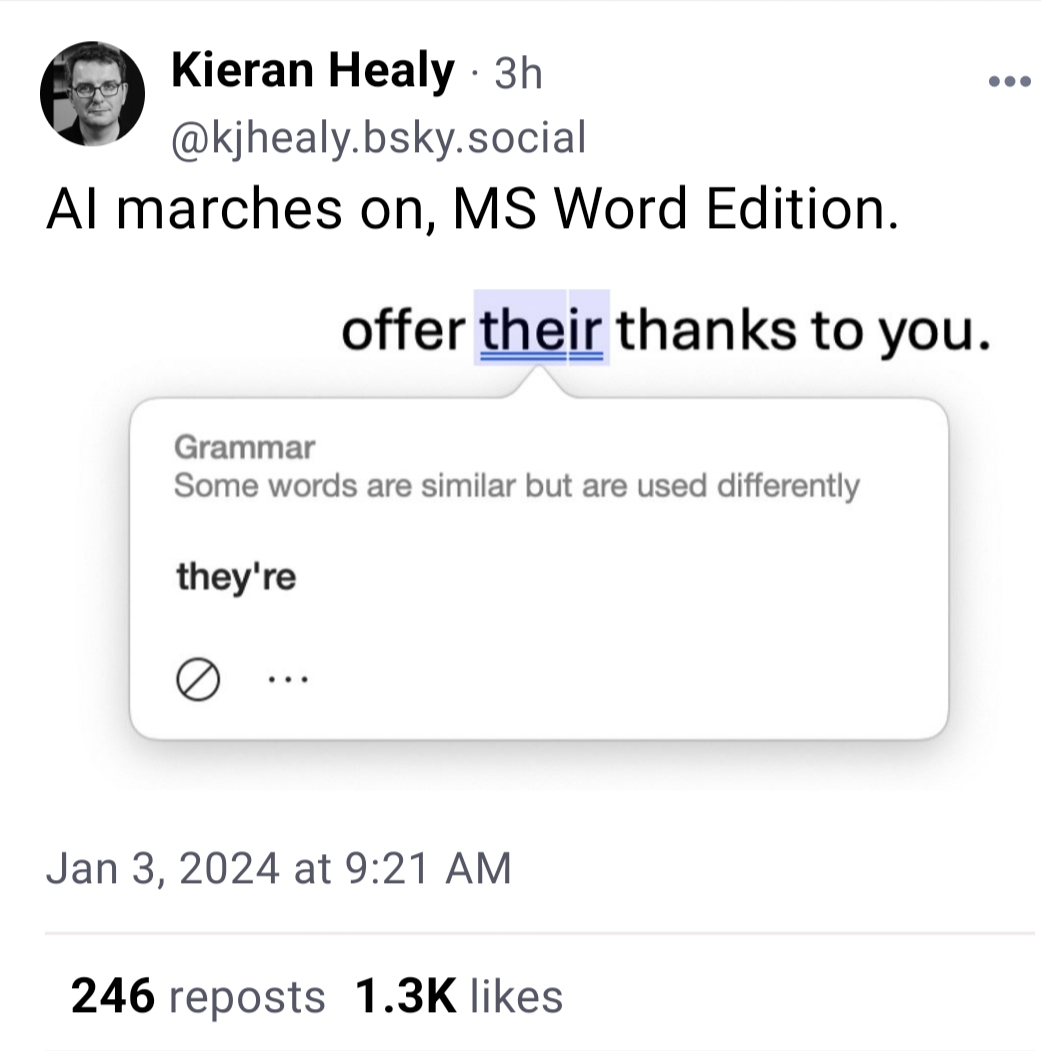

I've seen this with GPT-4. If I ask it to proofread text with errors, it consistently does a great job, but if I ask it to proofread text without errors, it hallucinates them. It's funny to see Microsoft having the same issue.

I'm pretty sure MS uses GPT-4 as the foundation of all their AI stuff, so it's not surprising to see them have the same issues. Funny, as you said, but not surprising.