I feel like we need to talk about Lemmy's massive tankie censorship problem. A lot of popular Lemmy communities are hosted on lemmy.ml. It's been well known for a while that the admins/mods of that instance have, let's say, rather extremist and one-sided political views. In short, they're what's colloquially referred to as tankies. This wouldn't be much of an issue if they didn't regularly abuse their admin/mod status to censor and silence people who dissent from their political beliefs and who, for example, post things critical of China, Russia, the USSR, socialism, ...

As an example, there was a thread today about the anniversary of the Tiananmen Massacre. When I was reading it, there were mostly posts critical of China in the thread and some whataboutist/denialist replies critical of the USA and the west. In terms of votes, the posts critical of China were definitely getting the most support.

I posted a comment in this thread linking to "https://archive.ph/2020.07.12-074312/https://imgur.com/a/AIIbbPs" (WARNING: graphic content), which describes aspects of the atrocities that aren't widely known even in the West, along with supporting evidence. My comment was promptly removed for violating the "Be nice and civil" rule. When I looked back at the thread, I noticed that all posts critical of China had been removed, while the whataboutist and denialist comments were left in place.

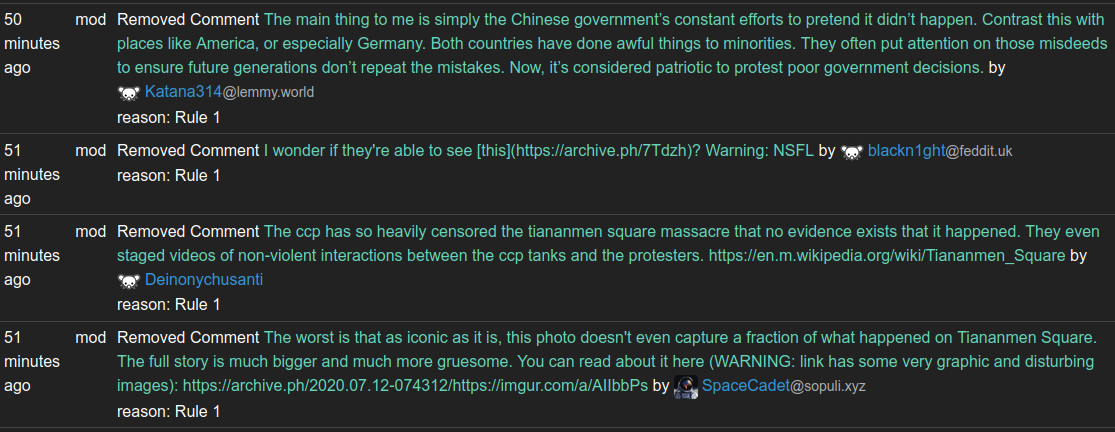

This is what the modlog of the instance looks like:

Definitely a trend there, wouldn't you say?

When I called them out on their one-sided censorship, with a screenshot of the modlog above, I promptly received a ban from every community on lemmy.ml that I had ever participated in.

Proof:

Many of you will now probably think something like: "So what, it's the fediverse, you can use another instance."

The problem with this reasoning is that many of the popular communities are actually on lemmy.ml, and they're not so easy to replace. I mean, in terms of content and engagement, Lemmy is already a pretty small place as it is. So it's rather pointless sitting, for example, in /c/linux@some.random.other.instance.world where there's nobody to discuss anything with.

I'm not sure if there's a solution here, but I'd like to urge people to avoid lemmy.ml-hosted communities in favor of communities on more reasonable instances.

US defaultism much?

This is absolutely not a thing where I live, and it sounds quite entitled to expect this level of personal service from an underpaid and overworked driver who's probably already overbooked and struggling to finish his round on time.

Here, a delivery driver will come to the street-facing door of a building and attempt to deliver to you in person, or if you live up high you can buzz him in to put the package in the shared entrance space, but he's not going to go on a lone quest to gain access to every single private multi-tenant building. You're not home, and haven't given permission to deliver to your neighbors? Tough shit. Come pick up the package at the depot.